Meteorologists have to be able to be self-reflective and open to constructive criticism after weather events with serious societal impacts

Mar 09, 2026

I am going to start off this post with a disclaimer: this is probably going to be one of my more controversial articles, and as such I want to be sure that readers understand that what I am saying here is based on my own experience and perspectives. I am certainly open to other thoughts and perspectives — and have opened up comments to all readers and would be happy to engage in respectful conversation. The other thing I want to make clear right off the bat is that my commentary here is made in the context of deep respect for everyone involved in the meteorological community that provides warnings and forecasts for severe weather — but also with the recognition that this work is literally a matter of life and death, and as such I think it important that we in the community critically evaluate our work and frankly share our perspectives.

Yesterday, several people shared with me this breaking news item that Democratic Michigan Governor Gretchen Whitmer was calling for a federal investigation into why no tornado watch was issued for the series of tornadoes in southwest Lower Michigan Friday afternoon that killed 4 people. Specifically, the governor wants to know whether the failure to issue a watch was related to the federal cuts to NOAA and NWS that I have been documenting for the last year.

My colleague Dr. Sean Ernst, the University of Oklahoma scientist I interviewed recently about his work on the Storm Prediction Center’s new Conditional Intensity Group (CIG) forecasts, has an excellent BlueSky thread about his thoughts on Gov. Whitmer’s announcement that I encourage you to read. He starts off the thread by saying “although the knee-jerk reaction might be to circle the meteorological wagons, we should take this moment to be more introspective and self-critical.” While I of course recognize there are political reasons for the governor’s statement, the fact is that we meteorologists should be able to see that there are valid reasons for concern about this event — and I hope that this piece will help the process of examining what transpired.

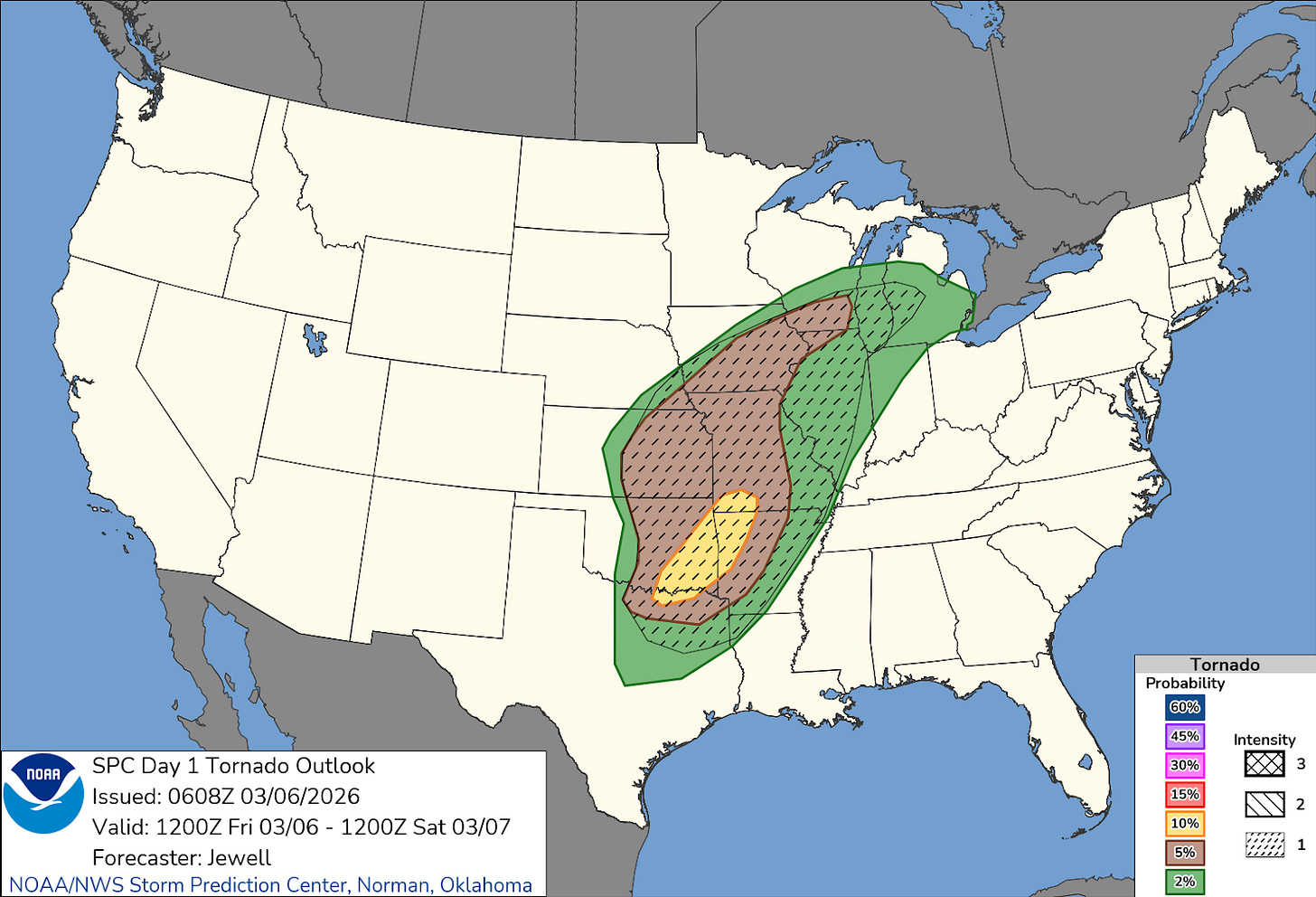

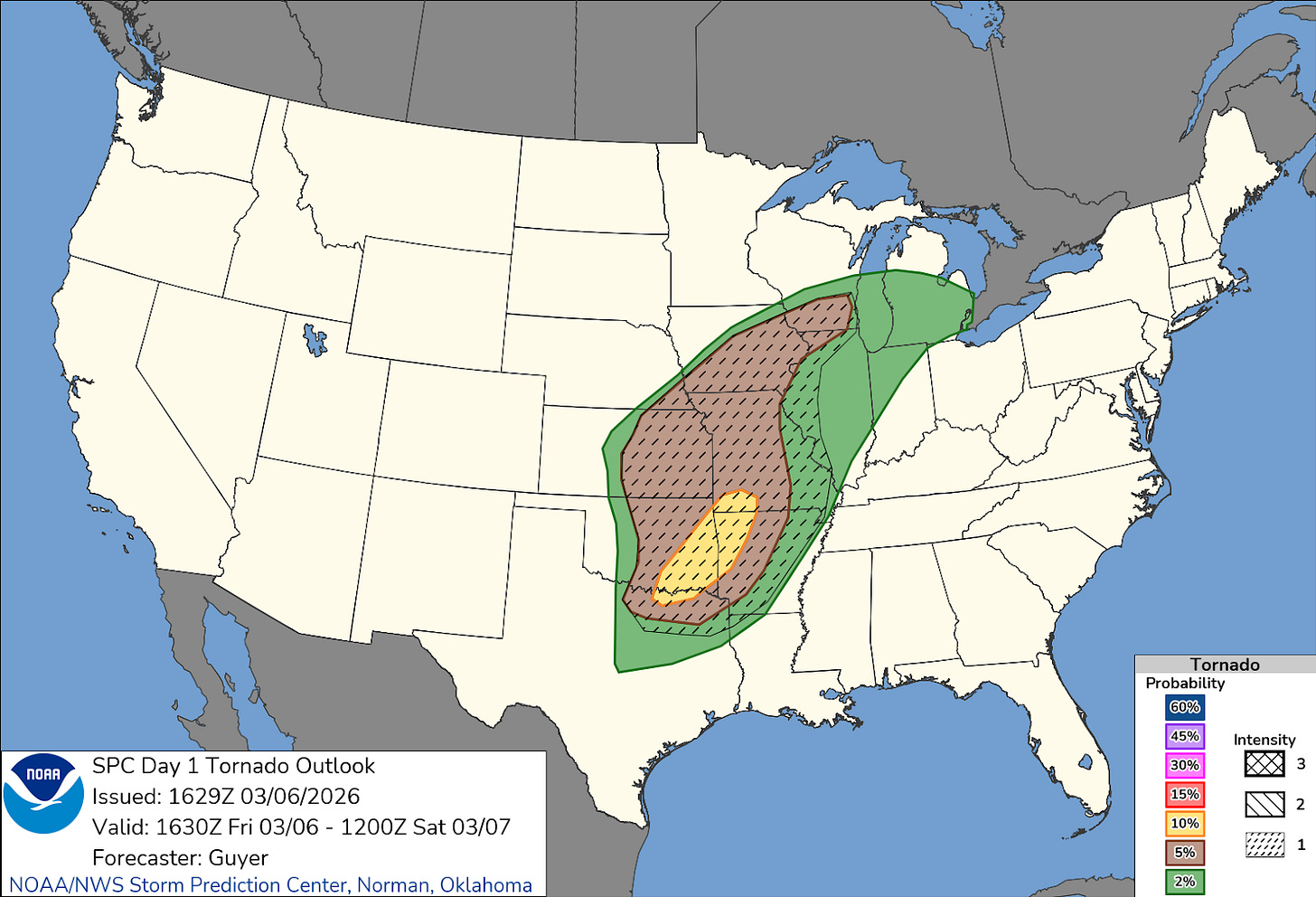

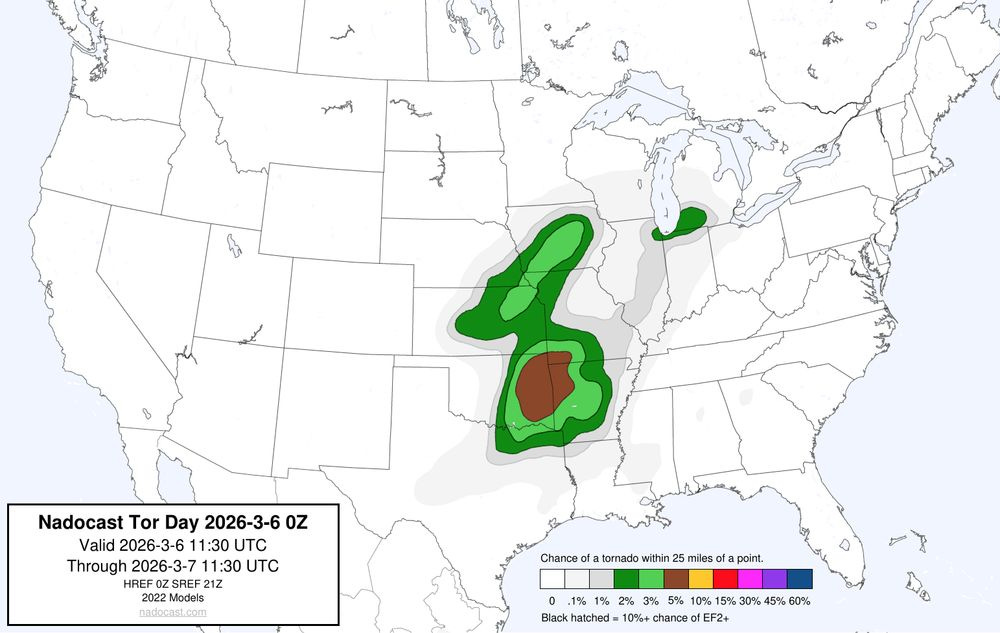

So let’s start by looking again at the actual forecasts leading up to the event. Above is the initial day 1 probabilistic tornado outlook issued around midnight Friday morning by the Storm Prediction Center (SPC). Southwest Lower Michigan was in a 2% tornado probability area, which is the lowest probability that can be shown by SPC and means that any point within that area has a 2% probability of seeing a tornado occur within 25 miles of it (meaningful probabilities for severe weather are inherently much lower than probabilities of precipitation because phenomena like tornadoes are much rarer). The “categorical” severe weather risk was marginal, level 1 of 5. A “CIG1” area that shows a risk for a strong (EF2+) tornado came into parts of Lower Michigan close to the area where the tornadoes would impact later that day, but just to the northwest.

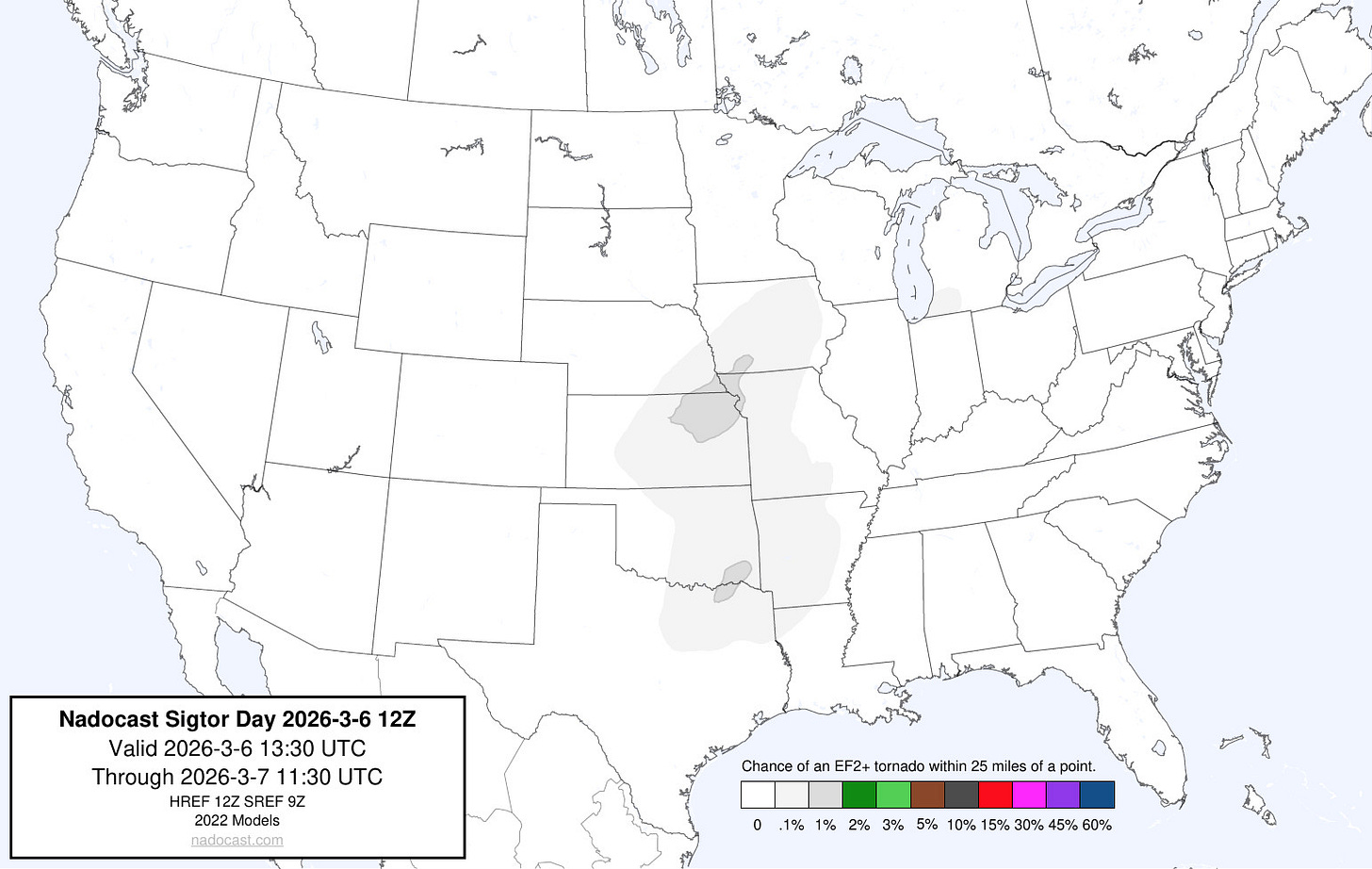

Later updates issued by SPC, such as this one in the late morning, actually pulled the CIG1 area farther west, meaning that no part of Lower Michigan was included. From my understanding of the conditional intensity group forecasts, EF3+ tornadoes occurring outside of a CIG1 area should be exceedingly rare if the SPC forecasts are well calibrated, i.e., on the order of 1 out of every 100 EF3+ tornadoes should happen outside of a CIG1 area.

The local office responsible for warnings and forecasts for southwest Lower Michigan, the NWS Northern Indiana office, did discuss in their early morning area forecast discussion some concern for more robust severe weather potential:

At this time there is consensus that we should see conditions improve for thunderstorm development this afternoon, especially toward the late afternoon and early evening looking at the HREF and individual CAMS (note, convective allowing or high resolution models). If storms can become more surface based all hazards are in play with the 50 knots of shear and ample storm relative helicity seen in the hodograph profiles, the highest risk will be in southwest Michigan along the I-94 corridor potentially up to I-96 where CAMS show storms becoming more surfaced based.

However, the actual “key messages” from the office — the daily summary of main points for the public and partners to be aware of issued at 3 am and 3 pm (just before the tornadoes) — did not mention these concerns:

• Record or near record high temperatures this afternoon.

• Scattered showers and storms (20-50%) this afternoon into this evening, mainly north of US 24 corridor. A few storms could produce small hail and wind gusts in excess of 40 mph.

• A round of showers and storms (80%+) tracks through later tonight into Saturday morning. A few strong to severe storms are possible with the threats of isolated wind gusts in excess of 50 mph and marginally severe hail.

• Dry weather returns for Sunday and Monday, but more showers and storms expected for Tuesday into Wednesday.

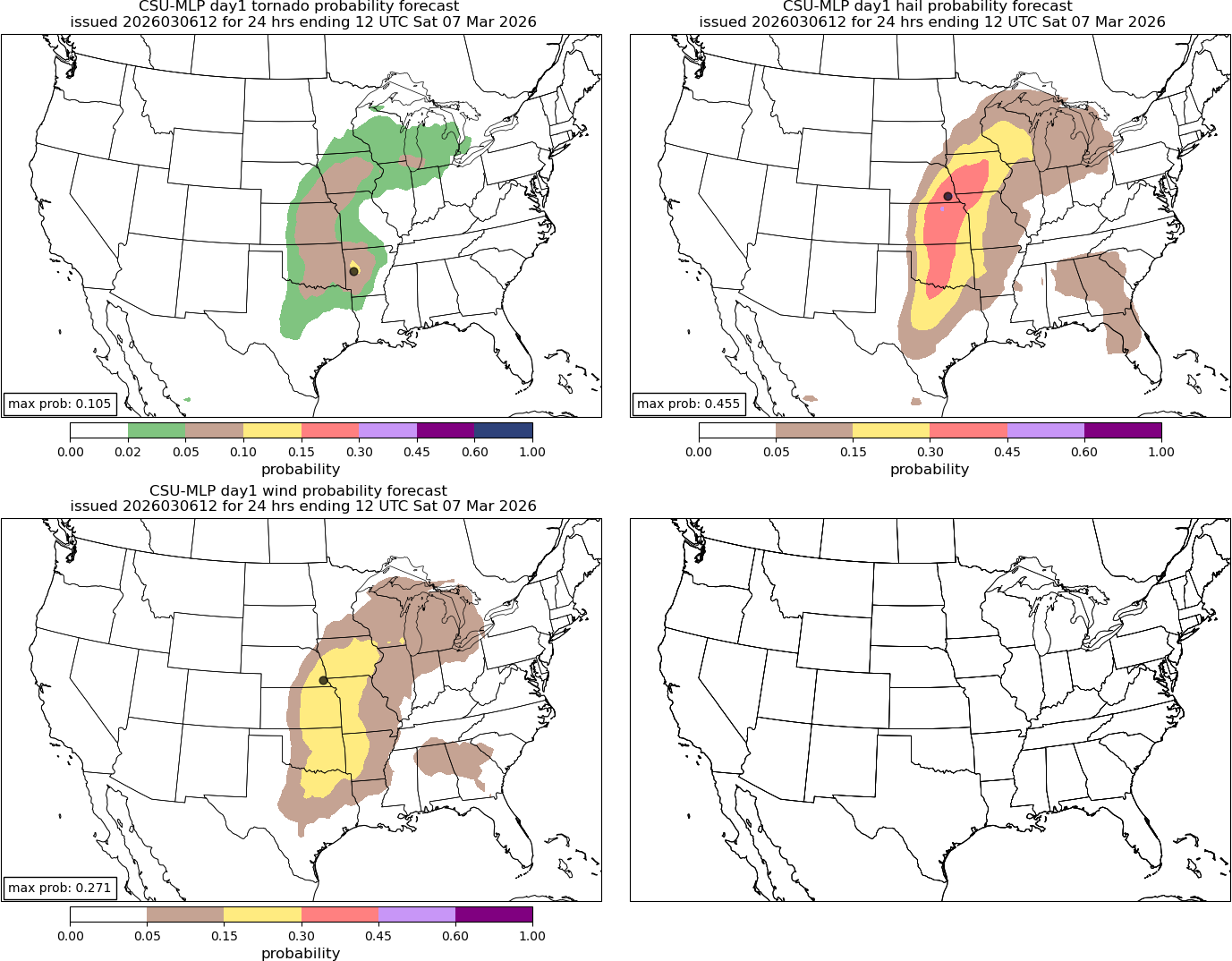

I am not going to go again into the meteorology of what made this a low probability tornado setup that had some higher end surprise potential (I refer you to my posts Friday night and Saturday for details), but I think it is important to recognize that the automated guidance available did not show particularly high tornado probabilities either. The Colorado State University Machine Learning Probabilities, which I frequently share and find to be a good tool, only had 2% tornado probabilities in the region with a small 5% area over southern Lake Michigan.

Another commonly used AI tool called Nadocast also showed low probabilities, and its significant tornado probabilities were very low (0.1%). The bottom line to me is that both subjectively and objectively this appeared to be a low probability tornado situation, but with some reason to be concerned about higher end potential if a number of factors developed into more of a worst case scenario (as I talked about in my meteorological posts linked above).

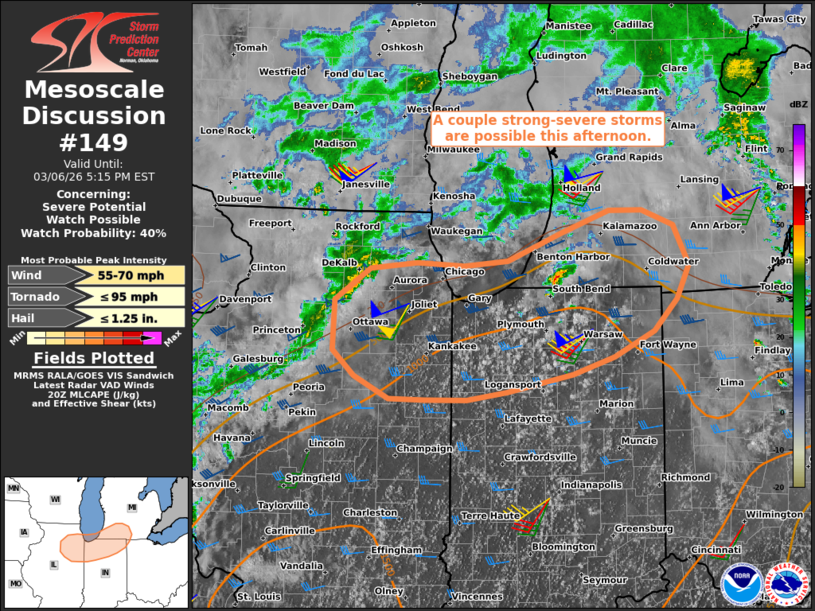

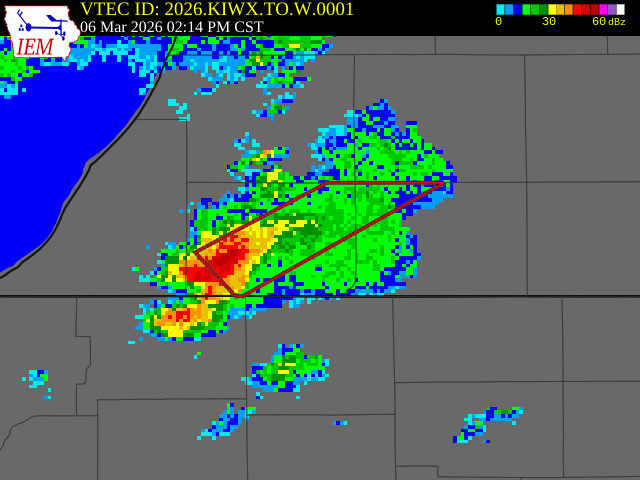

Even as the event got closer, the ultimate outcome was by no means clear. Once a supercell thunderstorm developed and moved across northwest Indiana, SPC issued this mesoscale discussion at 2:10 pm discussing the situation and the potential for a watch (severe thunderstorm or tornado) to be issued. As you can see, the watch probability was only 40% and ultimately no watch was issued. Of course, we know now what actually ended up happening: 3 tornadoes occurred, two of which were significant and one of which was a high end EF3 tornado that killed 3 people. The initial tornado near Edwardsburg, an EF1, killed a fourth person — a 12 year old boy.

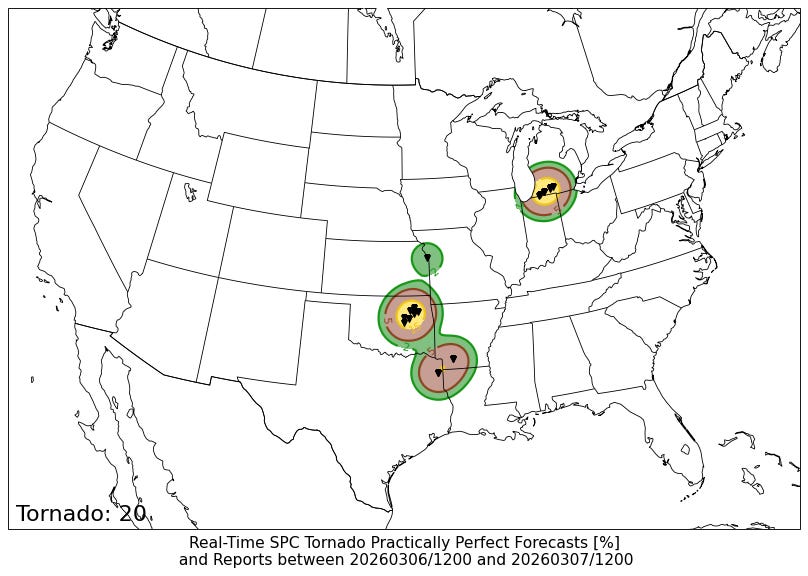

One of the ways in which we can examine the quality of a probabilistic severe weather forecast objectively is by comparing it to a “practically perfect forecast (PPF),” a technique in which we take the actual observed severe weather reports and map them to generate what the “perfect” probabilistic forecast should have been. For this event, the PPF tornado probability over southern Lower Michigan for Friday was 15% — obviously much higher than the 2% actually forecast. A 15% tornado probability area on an SPC forecast would equate to a categorical enhanced (level 3 of 5) outlook — and if a CIG2 were associated with it which was certainly viable given the EF3 tornado that occurred, it would be a moderate (level 4 of 5) risk.
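To make the idea concrete, here is a minimal sketch of how a practically perfect forecast can be built: mark the grid cells where tornadoes were reported, then smooth that 0/1 field with a Gaussian kernel so that each report spreads probability to its neighbors. The grid size, the report locations, and the smoothing width `sigma_cells` below are assumptions chosen purely for illustration; they are not the settings behind the 15% figure above, and the function name `practically_perfect` is hypothetical.

```python
import numpy as np


def practically_perfect(report_grid, sigma_cells):
    """Smooth a 0/1 grid of reports with a separable 2-D Gaussian kernel.

    report_grid : 2-D array, 1 where a report fell in the cell, else 0
    sigma_cells : Gaussian width in grid cells (an assumed, tunable value)
    """
    radius = int(np.ceil(3 * sigma_cells))
    offsets = np.arange(-radius, radius + 1)
    kernel = np.exp(-0.5 * (offsets / sigma_cells) ** 2)
    kernel /= kernel.sum()  # normalize so the weights sum to 1

    # Zero-pad so cells near the edge can still be smoothed.
    padded = np.pad(np.asarray(report_grid, dtype=float), radius, mode="constant")

    # A 2-D Gaussian is separable: smooth along rows, then along columns.
    rows = np.apply_along_axis(
        lambda r: np.convolve(r, kernel, mode="valid"), 1, padded
    )
    both = np.apply_along_axis(
        lambda c: np.convolve(c, kernel, mode="valid"), 0, rows
    )
    return both


# Hypothetical report locations on a toy 20 x 20 grid (not real data).
reports = np.zeros((20, 20))
reports[9, 9] = 1
reports[9, 10] = 1
reports[10, 11] = 1

ppf = practically_perfect(reports, sigma_cells=1.5)
print(f"Peak practically perfect probability: {100 * ppf.max():.1f}%")
```

The smoothed field can then be compared cell by cell with the probabilities that were actually forecast, which is the comparison described above.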

My bottom line is this: given the fact that the actual coverage and intensity of tornadoes verified at an enhanced to moderate outlook level and that there was no watch of any kind (severe thunderstorm or tornado) for an event in which 2 killer tornadoes and 2 EF2+ tornadoes occurred, I think it is more than reasonable for core partners and members of the public to view this as a poorly forecast event. We as meteorologists should be open to that criticism and not use the fact that we use probabilities (and to be clear, we should be using probabilities!!) to convey our forecasts as a cop out to deflect from that conclusion.

Rather, we should use this opportunity to double down on explaining to the public why we use probabilities, examine ways to communicate probabilistic forecasts and these challenging forecast situations to the public more effectively, and critically examine our forecast processes and objective guidance to try to find ways to improve the actual forecasts. If we as the meteorological community are going to (correctly) say that our forecasts and warnings are a matter of life and death when defending their importance to the public and politicians, we need to internalize that perspective and be willing to look at our services critically and listen to the concerns and criticisms of partners and the public.

Now, that brings me to what is perhaps the most difficult and potentially controversial part of this article — namely the initial tornado near the town of Edwardsburg. According to the initial NWS survey, this tornado started at 3:11 pm EST 2 miles west-northwest of Edwardsburg. Very shortly after developing, the tornado struck a home destroying an attached garage and damaging the front of the home — and it was here that the incredibly tragic death of a 12 year old boy happened. The tornado continued for another 13.4 miles until 3:35 pm, ending near Shavehead Lake. It was rated an EF1 by the NWS with maximum winds of 95 mph.

The first several minutes of this tornado were not preceded by any type of warning from the local NWS office, severe thunderstorm or tornado. A tornado warning was not issued until 3:14 pm EST as shown above.

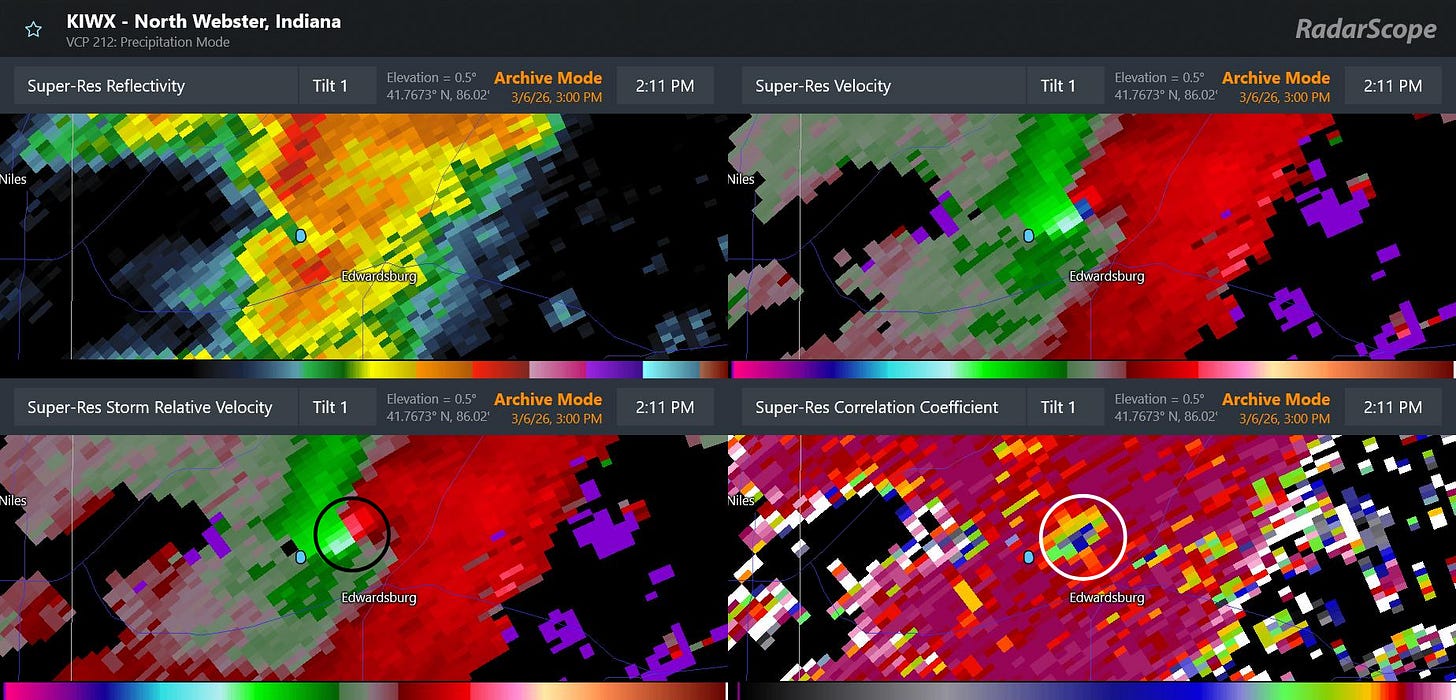

This is a four-panel radar image of the supercell severe thunderstorm responsible for the tornado from 3:11 pm EST (2:11 pm CST as shown on the radar product). It shows an intense rotational velocity “couplet” (black circled area) to the north-northwest of Edwardsburg, and a clear sign of debris lofted up to at least 5,000 feet (white circled area). This is the data that likely triggered the issuance of the tornado warning at 3:14 pm — although that warning did not state that the tornado was “radar confirmed” in spite of the clear indication here.

The small blue dot that you can see on the four panel image above to the southwest of the tornado signatures is the latitude and longitude of the start point listed in the initially released NWS damage survey. It is of course clear that the tornado is already past that point at 3:11 pm, the time the NWS said the tornado started. That fact, together with the radar already detecting debris up to 5,000 ft at 3:11 pm, suggested to me that the tornado started at least a couple of minutes earlier.

While this may not seem like a huge deal, with an unwarned tornado every minute matters as far as public perception and NWS verification statistics. This error was apparently just that, an error – NWS Northern Indiana late this afternoon issued a corrected survey changing the start time to 3:09 pm. However, to me this situation is a perfect example summarizing many of the reasons I presented in my post last week about why an impartial entity is needed to independently gather hazardous weather reports and impacts and evaluate NWS warnings and services. There is a clear conflict of interest in having the staff that has the responsibility for the tornado warning for a storm determine the timing and intensity of the tornado in question.
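As a small worked example of why those two minutes matter, the sketch below computes how long the tornado was on the ground before the 3:14 pm warning, using the original (3:11 pm) and corrected (3:09 pm) start times from the surveys. The function name `minutes_unwarned` is hypothetical and simply subtracts clock times on the same day; it is not how the NWS verification system itself is implemented.

```python
from datetime import datetime


def minutes_unwarned(tornado_start, warning_issued):
    """Minutes between tornado start and warning issuance (same day, HH:MM)."""
    fmt = "%H:%M"
    start = datetime.strptime(tornado_start, fmt)
    issued = datetime.strptime(warning_issued, fmt)
    return (issued - start).total_seconds() / 60.0


# Times in EST on a 24-hour clock, taken from the text above.
initial_survey = minutes_unwarned("15:11", "15:14")
corrected_survey = minutes_unwarned("15:09", "15:14")

print(f"Initial survey:   {initial_survey:.0f} minutes unwarned")
print(f"Corrected survey: {corrected_survey:.0f} minutes unwarned")
```

With the initial start time the tornado was unwarned for 3 minutes; with the corrected start time that grows to 5 minutes.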

Again, I say this as someone who was responsible for these exact processes for more than a decade as an NWS office manager. I am by no means accusing anyone of anything nefarious with this or any other severe weather verification situation, but I do not think anyone can deny that at least the optics of these processes are very questionable. The database of observed hazardous weather impacts is vital to our society and economy for many reasons beyond just verifying NWS forecasts and warnings, and as such, it should be collected and certified by an entity that does not have “skin in the game” as far as the data being used to analyze the efficacy of its core services.

Finally, that brings me to why no sort of warning was issued on this storm before it was already clearly tornadic, as shown by the tornadic debris signature on radar. Obviously, there is no way for me to answer that definitively, and at this point it is hard for me to analyze the radar data like I would have been doing in real-time without knowing what was about to happen. Having said that, there were pretty clear indications that this storm was a defined rotating supercell as early as a bit before 3 pm EST (2 pm CST on the radar product time), which in my opinion likely should have resulted in at least a severe thunderstorm warning given the environment.

While I cannot say with certainty why no warning was issued here, I do think it is unlikely that it was due to staffing reductions. Obviously, we have already discussed ad nauseam that this was a rather challenging setup meteorologically. Furthermore, concerns with NWS severe weather warnings are not a new issue since the staff reductions of the last year. Last July, the Department of Commerce Office of the Inspector General (OIG) released the final results of an independent evaluation of NWS tornado warning performance that had started well before the 2024 election. You can read the full report here, but this is the key finding from the summary at the start of the report:

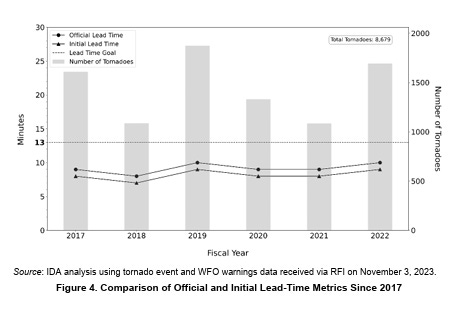

Since fiscal year (FY) 2011, the NWS has achieved its Government Performance and Results Act (GPRA) performance goal for false alarm ratio in 9 of the last 12 years. However, the NWS has consistently fallen short of meeting performance goals for probability of detection and warning lead times for this same time period. In addition to the NWS’s limited effectiveness in forecasting and warning performance, NOAA lacks official outcome metrics to assess progress toward the TWIEP (ed. note, Tornado Warning Improvement and Extension Program) goal of reducing the loss of life and economic losses from tornadoes, leaving this goal unmet without further improvements.

After the major tornado outbreaks in 2011, the NWS began to focus more heavily on the goal of reducing false alarms, i.e., the issuance of tornado warnings in which no tornado occurs. While this was not an official mandate, the results of the Joplin, MO tornado service assessment led to a sense in the agency (as a reminder, I was a meteorologist-in-charge within the NWS at this time) that false alarms were creating a “cry wolf” phenomenon that was making the public less responsive to warnings.

As you can see by the report summary I quote above, the NWS was successful in reducing false alarms, and began meeting its own performance goal for false alarm ratio. The problem, however, is that the NWS stopped meeting its goals for probability of detection, i.e., for having a tornado warning in effect before a tornado occurs, and for lead time, i.e., the amount of time an individual receives a warning before being impacted by a tornado. For many of us in the NWS and broader community, this was unsurprising, as most analyses indicated that it would not be possible to reduce false alarms without negatively affecting detection unless there was a significant influx of new science or technology. In other words, the overall warning approach was relatively close to the state of the science, so the only way to reduce false alarms was to reduce the number of warnings, thereby also likely reducing detection and lead time.
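For readers who want to see how these verification measures are defined, here is a minimal sketch of probability of detection (POD) and false alarm ratio (FAR) computed from simple counts. The counts are entirely hypothetical and chosen only to illustrate the tradeoff described above; they are not NWS statistics, and the class and function names are my own for illustration.

```python
from dataclasses import dataclass


@dataclass
class WarningCounts:
    warned_tornadoes: int      # tornadoes with a warning in effect when they occurred
    unwarned_tornadoes: int    # tornadoes with no warning in effect
    verified_warnings: int     # warnings in which a tornado occurred
    false_alarm_warnings: int  # warnings in which no tornado occurred


def probability_of_detection(c):
    """Fraction of tornadoes that were covered by a warning."""
    return c.warned_tornadoes / (c.warned_tornadoes + c.unwarned_tornadoes)


def false_alarm_ratio(c):
    """Fraction of warnings in which no tornado occurred."""
    return c.false_alarm_warnings / (c.verified_warnings + c.false_alarm_warnings)


# HYPOTHETICAL counts: the same 100 tornadoes, warned aggressively vs. conservatively.
aggressive = WarningCounts(70, 30, 60, 140)
conservative = WarningCounts(60, 40, 52, 98)

for label, counts in (("aggressive", aggressive), ("conservative", conservative)):
    pod = probability_of_detection(counts)
    far = false_alarm_ratio(counts)
    print(f"{label:>12}: POD = {pod:.2f}, FAR = {far:.2f}")
```

In this made-up example, issuing fewer warnings lowers FAR from 0.70 to about 0.65, but POD drops from 0.70 to 0.60, which is the kind of tradeoff many of us expected.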

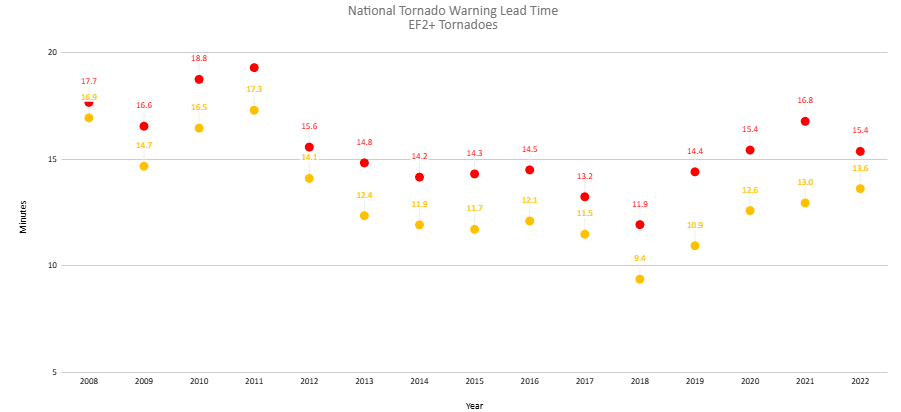

While I have heard some of my colleagues blame the reduction in POD and lead time on the fact that we are detecting and reporting a greater number of weaker tornadoes today that used to go unreported, I do not believe the science necessarily backs that up. An analysis I did in 2023 showed that the national tornado warning lead time had not just diminished since 2011 for all tornadoes; it had also diminished specifically for stronger tornadoes, which should not be the case if the reduction was due to detection of more weak tornadoes. In the period 2008-2011, the national average annual initial lead time of a tornado warning (above) for an EF2+ tornado was never lower than 14.7 minutes. It has never been that high since 2011, and was as low as 9.4 minutes in 2018 before recovering somewhat in recent years.

I will likely have more to say about all of this in future posts, but I want to use this post to make a couple of overarching points of what is needed in my opinion to improve the NWS tornado warning program. As Sean Ernst highlights in his thread today, there is an asymmetric penalty function inherent in tornado warnings: “perceived repeated false alarms can chip away at trust but a single perceived missed event can greatly reduce trust.” (emphasis added)

In other words, false alarms can indeed be an issue over time, although social science studies show that experts contextualizing and communicating the reasons for them can help offset the negative impacts. On the flip side, not having a warning in effect when a tornado occurs means that nobody can take advance preventative action for the threat based on a warning, and when negative impacts result (particularly something as grievous as the death of a child), the implications for trust in NWS can be profound.

At a programmatic high level, I believe NWS leadership must reinforce that the focus of the NWS tornado warning program should be on maximizing detection and lead time. Of course, the NWS should make all efforts to minimize false alarms and continue to work to keep the false alarm ratio as low as possible, but the clear focus of the goals of NWS training and research on severe storms should be to, as recommended in the OIG report, improve probability of detection and lead times.

Perhaps even more importantly, the NWS must develop specialists whose sole role is the issuance of warnings and near term (0-3 hour) forecasts of severe storms. When the NWS modernized its operational structure and installed the NEXRAD Doppler weather radar network in the 1990s, it created Weather Forecast Offices where local meteorologists were responsible for issuing short fused warnings (i.e., tornado, severe thunderstorm and flash flood) for their service area. At that time, there really was no alternative structure — the volume of data each radar produced and the state of communications networks at that time meant that the radar data needed to issue accurate short fused warnings was only available locally. Every operational meteorologist in the NWS went through a multi-week resident course to be trained on the meteorology and knobology needed to be a warning meteorologist.

Fast forward to today — I literally have all of the meteorological data at my disposal to be able to issue short fused warnings for any location in the country. Additionally, over the last 30 years, the science and technology surrounding severe thunderstorm analysis and forecasting has advanced tremendously and become much more complex: expertise regarding dual-polarization radar data, high resolution satellite data, convective allowing models, mesoanalysis techniques and new theories of tornadogenesis are just some of the key areas that a top-level warning meteorologist should have in their toolbelt. The idea that all of the 1000+ meteorologists can be true experts in all of these areas is, in my opinion, unachievable.

Furthermore, various projects over the years in NOAA testbeds and proving grounds and analysis of warning statistics have shown that meteorologists who deal with severe weather more frequently become more proficient at the warning and forecast process. A meteorologist in Norman or Jackson who deals with severe weather literally dozens of times a year is — all other things being equal — going to have more experiential skill than a meteorologist in Montana or New England who deals with it more infrequently. However, the public and partners in Montana and New England should expect the same level of service from the NWS as someone in Oklahoma or Mississippi whenever their areas are threatened by severe weather.

In my opinion it is well past time for the NWS to move to an operational warning paradigm in which meteorologists who specialize in severe weather, and have that as their sole operational focus, play a key role in the warning process. We do not rely on general practice medical doctors to treat people with serious heart conditions or cancers, and the idea that we rely on general practice meteorologists to be the sole issuers of severe weather warnings seems to me just as silly today. I completely understand that the NWS will likely want to keep warnings flowing from local offices (there are good social science reasons for this), but given the ubiquitous availability of the meteorological data, there is no reason why an operational paradigm cannot be developed in which regional offices with severe weather specialists for their given area could have overall coordination and collaboration responsibility and ensure that timely and accurate warnings and short term thunderstorm hazard forecasts are flowing to the public. When outbreaks occur, specialists from around the country would be able to work together with local offices to ensure that the affected citizens are receiving the absolute best warning and forecast services possible. That collaborative support would be particularly beneficial and crucial in those areas where severe weather is more infrequent.

This post has become incredibly long — so I am going to wrap it up here. Again, I have touched on many issues, and because of that I am going to open comments and questions to any and all. Hopefully, my passion for this topic has come through, and if you have made it this far, you truly understand that my goal with this article is not about blame or assigning fault for any perceived shortcomings in forecasts or programs. Rather, to me the issuance of hazardous weather forecasts is the most important thing that the meteorological community does — and the warnings and short term forecasts for rapidly developing and evolving hazards such as tornadoes and flash floods are the most complex and time sensitive aspects of that hazardous weather mission. It is vital that we as a community work together to dispassionately evaluate our forecasts and services, and to do what it takes to continually improve. This is also why the concept of an independent review entity for weather disasters — and indeed all disasters — is so important in my opinion.
